How to Integrate Claude Code into CI/CD Pipelines

You have AI, BI, and CI/CD. I'm going to give up technology and buy a farm with some EI-EI-O. - Ancient 6502 Proverb

Claude Code has a real problem with CI/CD automation. The issue? Claude Code is conversational. CI/CD is automated. How do you marry the two? In this Claude Code tutorial on CI/CD, I'll show you how to fix that, and more. Let's go.

There's one big contrast between how Claude Code works and how CI/CD works. Claude Code is an interactive tool. It works based on the inputs it receives, and when it has questions, it waits for more input. The CI/CD pipeline doesn't wait. It runs a job from one end to the other, stopping early only when the job hangs and eventually times out. If you've ever used CI/CD to run your jobs, you know how frustrating it can be when it doesn't work quite as expected.

Which is why Claude Code has a solution for automated pipelines, and it's called the -p flag.

-p is Made for CI/CD

The -p flag (claude -p "your_prompt") runs Claude Code in non-interactive mode. Claude makes its decisions without stopping to ask questions or request confirmation. That matters in CI/CD because Claude Code runs interactively by default: if it paused for a response, the job would sit waiting for user input that's never going to come and would eventually time out.
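To make the timeout concern concrete, here is a minimal Python sketch of how a pipeline script might call claude -p. The helper names (build_command, run_headless) and the 300-second limit are illustrative assumptions, not part of Claude Code. Closing stdin and setting a timeout simply make the job fail fast instead of hanging if anything ever waits for input.

```python
import subprocess


def build_command(prompt: str) -> list[str]:
    """Return the argument list for a non-interactive Claude Code call."""
    return ["claude", "-p", prompt]


def run_headless(prompt: str, timeout_seconds: int = 300) -> str:
    """Run claude -p and return its standard output.

    stdin is closed so nothing can wait on keyboard input, and the
    timeout (an assumed value) stops the job instead of letting it hang.
    """
    completed = subprocess.run(
        build_command(prompt),
        stdin=subprocess.DEVNULL,
        capture_output=True,
        text=True,
        timeout=timeout_seconds,
        check=True,  # raise CalledProcessError on a non-zero exit code
    )
    return completed.stdout
```

Calling run_headless("review counter.py") requires the claude CLI to be installed and authenticated. build_command can be inspected without either.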

I think the best way to understand this is to just run this from the command line without having to even use CI/CD so you can see how this works with, and without, the -p flag.

If you want to set this up on your machine, just create a file called counter.py and paste the following code in it:

def count():
    global _count = 0
    _count += 1
    return _count

def main():
    result = count()
    print(result)

if __name__ == "__main__":
    main()

All this Python script is supposed to do is increment a count by one and print the result.

Example Without -p

Let's start with an example without the -p flag, which is the default Claude Code interactive mode. Run the following from the terminal in the same folder where you saved the Python script we created above: claude "review counter.py". This prompt asks Claude Code to review the counter.py file.

- You'll notice that a new Claude interactive session starts

- You may see that Claude asks a question and waits for a response, because it's in interactive mode

❯ review counter.py

● Let me explore the project first.

Read 1 file (ctrl+o to expand)

● Bash(git log --oneline -10 2>/dev/null || echo "Not a git repo or no commits")

⎿ Not a git repo or no commits

● Bash(git diff HEAD~1 --stat 2>/dev/null; git diff HEAD~1 2>/dev/null | head -500)

⎿ Running…

───────────────────────────────────────────────────────────────────────

Bash command

git diff HEAD~1 --stat 2>/dev/null; git diff HEAD~1 2>/dev/null | head -500

Check recent changes

Command contains output redirection (>) which could write to arbitrary files

Do you want to proceed?

❯ 1. Yes

2. No

Esc to cancel · Tab to amend · ctrl+e to explain

As you can see in the terminal output above, without the -p flag, user interaction was required before Claude Code could continue. If this were running in CI/CD, the job would time out because no one can respond to Claude Code's prompts in a pipeline job. The review of counter.py would never complete successfully.

But wait. When you ran claude "review counter.py", you may not have been prompted with any questions. Why the difference?

This all goes back to the way AI is designed. Not every execution path is the same, and the same tools aren't chosen for every execution. The discretion falls solely on Claude Code unless instructed otherwise, so running this without the -p flag may succeed a dozen times with no interaction and then stop on a prompt the next time. So why do we need the -p flag?

Example With -p

The -p flag is for non-interactive mode in CI/CD. Let's try the same thing again, but this time using the -p flag. Run the following from the terminal in the same location where you stored the Python script we created above: claude -p "review counter.py"

Here's my review of `counter.py`:

**Bug — Line 2: Invalid Python syntax**

global _count = 0

`global` declarations cannot include assignment. This will raise a `SyntaxError` at import time. It needs to be split:

_count = 0

def count():
    global _count
    _count += 1
    return _count

The `_count = 0` initialization must be at module level, and the `global _count` declaration inside the function should stand alone.

**That's the only issue.** The rest of the code (`main()`, the `__name__` guard) is fine. Want me to fix it?

See the difference? Claude Code was able to successfully review the counter.py script and actually found a bug (no actual bugs were harmed during the making of this script) all without user interaction.
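For reference, applying the fix from the review to the whole file gives something like the version below. It is a sketch of the suggested change rather than output from Claude Code, with comments added for clarity.

```python
# Module-level state, initialized once when the file is loaded.
_count = 0


def count() -> int:
    """Increment the module-level counter and return the new value."""
    global _count  # declaration only; the update happens on the next line
    _count += 1
    return _count


def main() -> None:
    result = count()
    print(result)


if __name__ == "__main__":
    main()
```

Saved as counter.py and run with python counter.py, this version prints 1 instead of raising a SyntaxError.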

Grab a Shovel and Let's Dig Deeper

For the purposes of the Claude Certified Architect exam, you just need to know that the -p flag is for non-interactive mode in CI/CD.

But, as with many things in life, it's not always black-and-white.

The official Anthropic Claude Code documentation says even when using the -p flag, Claude Code could still prompt you for instructions, which could make it not viable for automated pipelines in CI/CD. Typically these instruction prompts involve permissions.

There are a couple of other options, though.

Dangerous is My Middle Name

I don't recommend this approach, but I'm writing about it so you have the full picture. If your pipeline is still getting prompts for permissions-related issues, you can throw all caution to the wind and call claude with the --dangerously-skip-permissions flag. But don't do this. Don't ever run Claude Code with this flag unless you are absolutely, positively sure you know what you're doing. And then you should still reconsider that decision. Don't do it. Just don't. But if you do, you won't be prompted for permissions-related instructions.

Here's an example of the command that you should not run: claude -p "review counter.py" --dangerously-skip-permissions.

Auto is My Other Middle Name

The safer alternative to --dangerously-skip-permissions is --permission-mode auto, which uses a classifier to review commands before they're executed. This blocks potentially dangerous actions while still allowing routine work to continue without permission prompts. When combined with the -p flag, auto mode will abort if the classifier continually blocks actions it has deemed potentially dangerous.

Here's an example that would be safer to run: claude -p "review counter.py" --permission-mode auto.
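The two permission options above can be captured in a small command builder. This is only a sketch: build_review_command is a hypothetical helper, and the flag spellings are copied from the examples in this article, so confirm them against the Claude Code version you have installed (claude --help lists the supported flags).

```python
from typing import Optional


def build_review_command(
    prompt: str,
    permission_mode: Optional[str] = None,
    skip_permissions: bool = False,
) -> list[str]:
    """Build a claude -p command with at most one permission option."""
    if skip_permissions and permission_mode is not None:
        # Mixing the two would hide which behavior the job relies on.
        raise ValueError("choose either a permission mode or skip_permissions")

    command = ["claude", "-p", prompt]
    if skip_permissions:
        # The option this article recommends against.
        command.append("--dangerously-skip-permissions")
    elif permission_mode is not None:
        command.extend(["--permission-mode", permission_mode])
    return command
```

Making the dangerous option an explicit keyword argument that defaults to False means a reviewer has to see skip_permissions=True in the pipeline script before it can take effect.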

GitHub Actions

Earlier today, I created an account on GitHub for the purpose of setting up a CI/CD pipeline and teaching myself how to use the -p flag. Now I'm going to use it as an example for this article.

FYI, this isn't a tutorial on GitHub Actions, and I assume you know what they are. I'm going to just jump into it. To perform this example on your own, you will need an Anthropic API key stored in GitHub as a secret named ANTHROPIC_API_KEY (the name the workflow below reads) and some API credits ($5 USD is enough for a few runs).

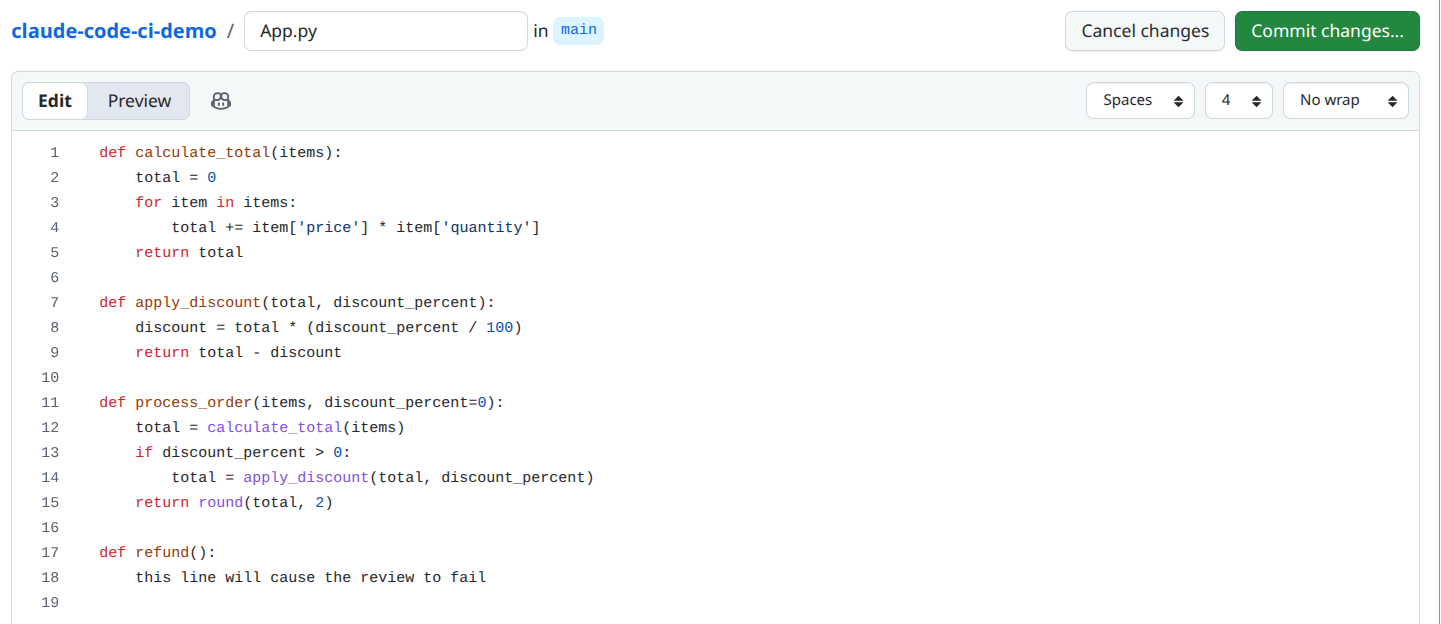

On GitHub, I have a repo with a file in it called App.py. If you want to follow along, create the same file in your GitHub repo and paste the contents below into it and commit the change.

def calculate_total(items):
    total = 0
    for item in items:
        total += item['price'] * item['quantity']
    return total

def apply_discount(total, discount_percent):
    discount = total * (discount_percent / 100)
    return total - discount

def process_order(items, discount_percent=0):
    total = calculate_total(items)
    if discount_percent > 0:
        total = apply_discount(total, discount_percent)
    return round(total, 2)

def apply_refund(total):
    return total - total

Perfect. Now our code is committed to the repo. We need to do a couple of other short things to set this up and we'll be ready to examine GitHub Actions in depth.

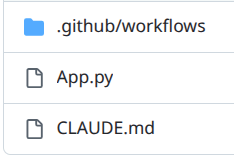

In your repo, add a new folder structure, .github/workflows, and place a file in it called claude-review.yml. If you're not familiar with the extension, this is a YAML file, and it's essentially a configuration file. YAML is the same language used in the frontmatter of many configuration files in Claude Code.

Inside the claude-review.yml file, paste this code:

name: Claude Code Review

on:
  push:
    branches: [ main ]
  pull_request:
    branches: [ main ]

jobs:
  claude-review:
    runs-on: ubuntu-latest
    steps:
      - name: Checkout code
        uses: actions/checkout@v4

      - name: Install Node.js
        uses: actions/setup-node@v4
        with:
          node-version: '20'

      - name: Install Claude Code
        run: npm install -g @anthropic-ai/claude-code

      - name: Run Claude Code Review
        env:
          ANTHROPIC_API_KEY: ${{ secrets.ANTHROPIC_API_KEY }}
        run: |
          claude "Review the code in app.py. Check for bugs, missing input validation, and edge cases. Be specific about line numbers." --output-format json

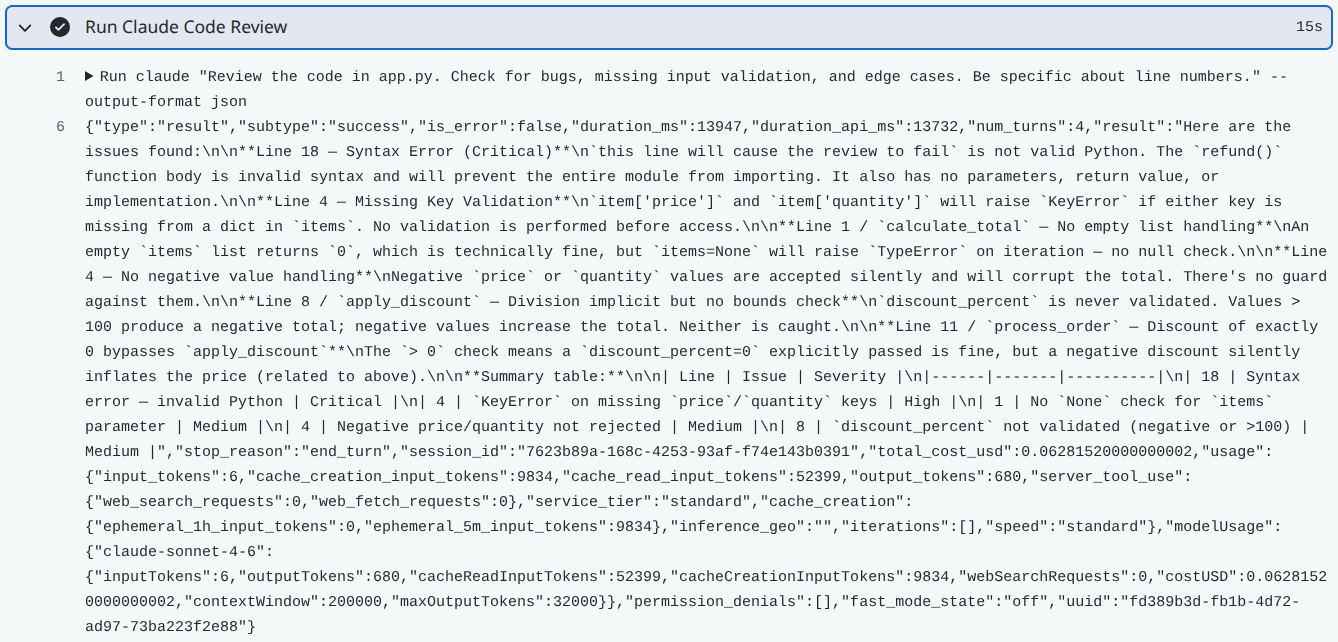

This YAML, without getting too far into the weeds, instructs GitHub to perform several coordinated actions on pushes to the main branch or on pull requests targeting the main branch. The action we're most concerned with is toward the bottom under - name: Run Claude Code Review. This action will review the code in the App.py file. The specific prompt for the action is claude "Review the code in app.py. Check for bugs, missing input validation, and edge cases. Be specific about line numbers." --output-format json.

Notice I did not use the -p flag.

And let's add a CLAUDE.md file to the project root. This file contains the guidelines Claude will use when performing the code review. You can paste in the code below:

# Claude Code CI Demo

## Project Context

This is a Python order processing module.

## Review Standards

- Flag any division by zero risks

- Flag missing input validation

- Flag any edge cases not handled

- Do not flag style issues or minor formatting

- Focus on bugs and security concerns only

## Testing Standards

- Functions should handle empty lists

- Functions should handle negative values

- Functions should handle missing dictionary keys

Now your project should look like this:

To force Claude Code to run a code review, let's modify the code in App.py. Maybe delete that refund function at the bottom and replace it with one that will definitely throw more red flags than 47 git commits only using the word "fix".

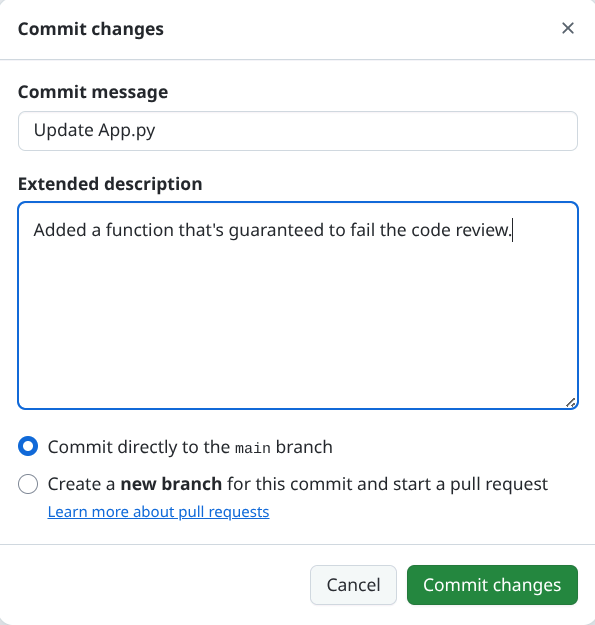

Then commit the change:

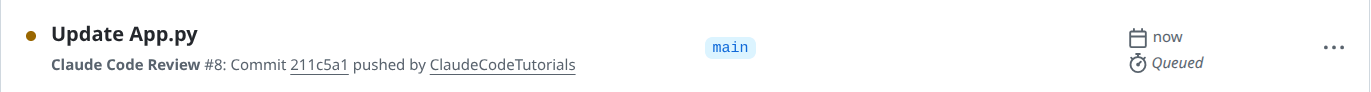

Now if you go to the Actions tab in GitHub, you'll see your job in queue to run:

And after it runs, you can see the results of the review step:

Looks Like it was Successful to Me

You're right. Even without the -p flag, GitHub had no issue performing a code review. That's because GitHub runs CI/CD pipelines in a virtual environment without an interactive terminal, so the results are the same with or without the -p flag. But not all CI/CD systems are the same, and Jenkins or Azure DevOps may require the -p flag to run Claude Code in non-interactive mode.
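One way to see what "no interactive terminal" means is to check for it from a script. The sketch below assumes the runner sets the CI environment variable to true, which GitHub Actions does. The function name is illustrative, and other CI systems may expose different variables.

```python
from typing import Mapping


def is_non_interactive(env: Mapping[str, str], stdin_is_tty: bool) -> bool:
    """Guess whether the process is running with nobody at the keyboard.

    env is an environment mapping such as os.environ, and stdin_is_tty
    is the value of sys.stdin.isatty() in the calling script.
    """
    in_ci = env.get("CI", "").lower() == "true"
    return in_ci or not stdin_is_tty
```

In a real script you would call is_non_interactive(os.environ, sys.stdin.isatty()). Whatever it returns, passing -p explicitly keeps the behavior the same on every platform.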

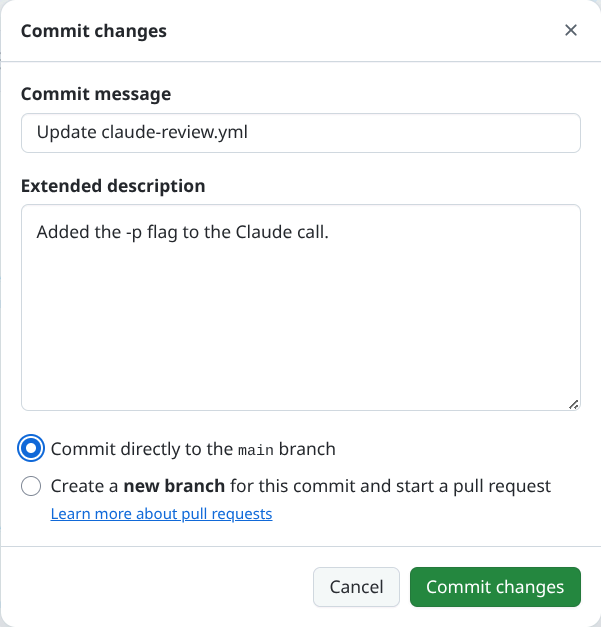

The correct way to set up your claude-review.yml file in your repo is to add the -p flag to the claude prompt. Go in and modify that file, replacing all of the contents with the text below.

name: Claude Code Review

on:
  push:
    branches: [ main ]
  pull_request:
    branches: [ main ]

jobs:
  claude-review:
    runs-on: ubuntu-latest
    steps:
      - name: Checkout code
        uses: actions/checkout@v4

      - name: Install Node.js
        uses: actions/setup-node@v4
        with:
          node-version: '20'

      - name: Install Claude Code
        run: npm install -g @anthropic-ai/claude-code

      - name: Run Claude Code Review
        env:
          ANTHROPIC_API_KEY: ${{ secrets.ANTHROPIC_API_KEY }}
        run: |
          claude -p "Review the code in app.py. Check for bugs, missing input validation, and edge cases. Be specific about line numbers." --output-format json

Notice the line claude -p "Review the code in app.py. Check for bugs, missing input validation, and edge cases. Be specific about line numbers." --output-format json now has the -p flag, which puts Claude Code in non-interactive mode for any CI/CD platform.

Now commit it.

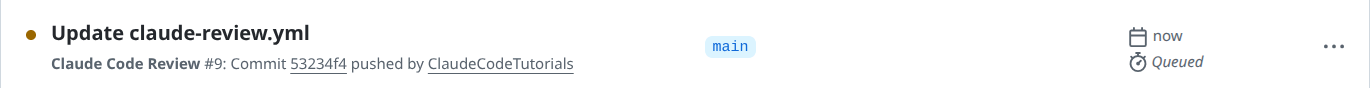

Now go into the Actions tab and wait for your job to complete.

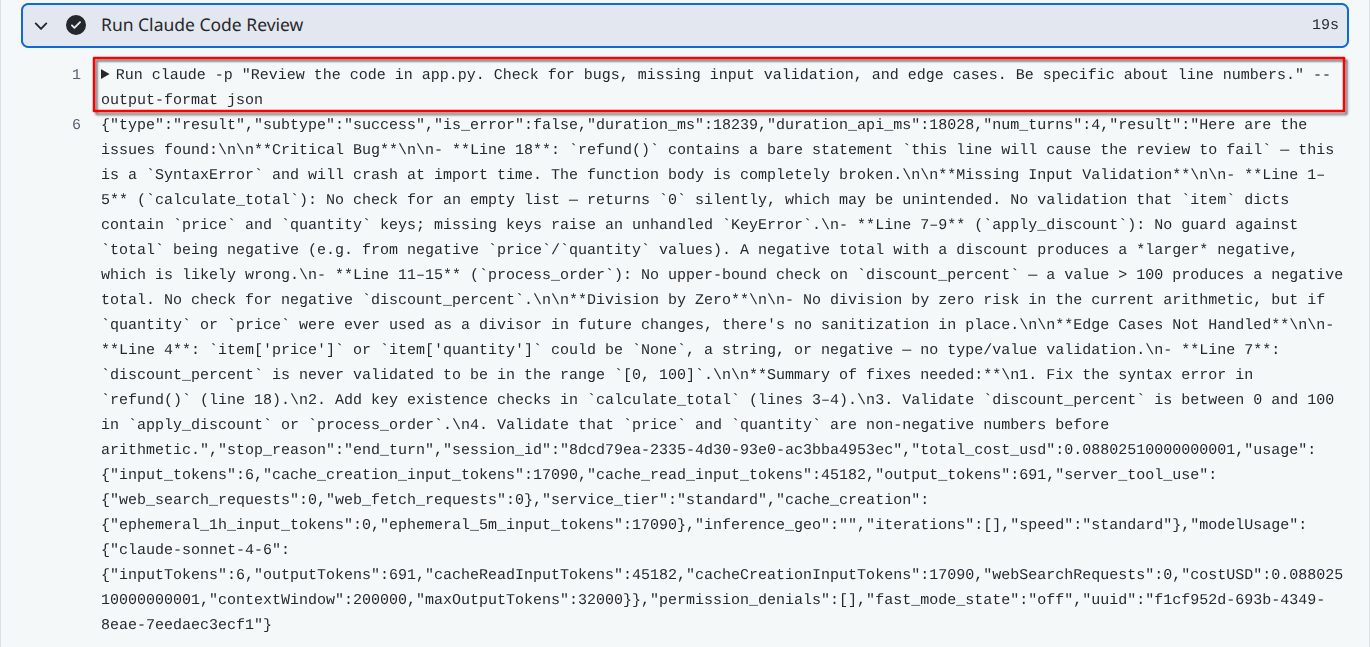

The job ran successfully, and in the Run Claude Code Review details, you can see the -p flag in the call to Claude:

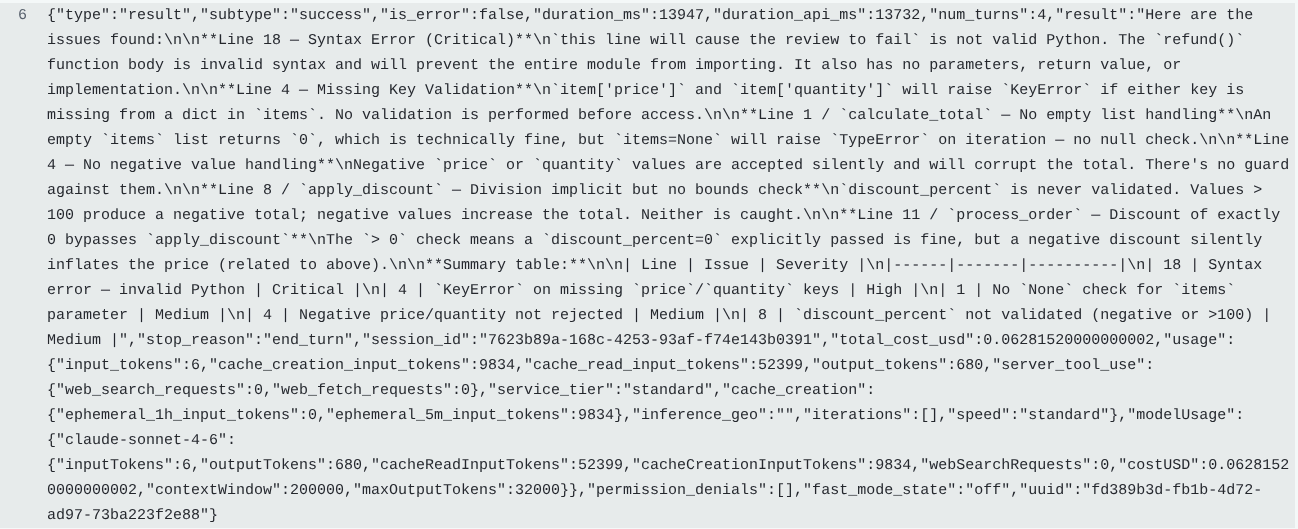

And we can see the output from the code review in JSON format, including all of the issues found with the code.
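A later pipeline step could read that JSON instead of a person scanning the log. The sketch below assumes the output is a single JSON object with is_error and result fields. Treat those field names, the helper name extract_review, and the sample payload as assumptions to verify against your own job output.

```python
import json


def extract_review(raw_output: str) -> tuple[bool, str]:
    """Return (succeeded, review_text) from Claude Code's JSON output.

    Assumes one JSON object with "is_error" and "result" keys. A missing
    "is_error" key is treated as a failure so problems are not hidden.
    """
    payload = json.loads(raw_output)
    succeeded = not payload.get("is_error", True)
    review_text = payload.get("result", "")
    return succeeded, review_text


# Hypothetical payload, made up for illustration only.
sample = '{"type": "result", "is_error": false, "result": "example review text"}'
succeeded, review_text = extract_review(sample)
```

A step built on this could fail the job when succeeded is False, or post review_text as a pull request comment.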

Session Isolation

Now that we've had Claude Code use GitHub Actions to perform a code review, let's talk for a minute about sessions and why major functions should be isolated from one another.

If you're using Claude Code to generate code, it builds up context, and that context is how it makes decisions about what to do and how to do it. That's one of the reasons AI works so well: it can remember what's been done and use that information to make a best-guess estimate of what should be done next.

Freshen Up

But the same benefit we get from the built-up context while creating code is a hindrance when we're trying to review code. And the reason for that is simple: there's context. Claude Code knows what it did. It knows what decisions it made. It knows why it did what it did. It can more easily rationalize away why code is written or behaves the way it does.

What you need is a fresh set of eyes. And the easiest way to do that in Claude Code is to create a new session that does not reference the code-creating session at all. With no prior reasoning context, the new Claude Code session has no biases toward your code based on past actions.

In GitHub, a new session is always created for every review step. But in larger CI/CD pipelines in other systems, you may be using the same session to create code as you are to review the code. You always want them to be isolated to remove inherent bias.

The Claude Certified Architect exam tests this concept specifically: an independent review session that does not include prior reasoning context will find more issues than self-review instructions or extended thinking.
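In a pipeline script, isolation can be as simple as making generation and review two separate claude -p invocations. The sketch below is one way to lay that out. The function names and prompts are hypothetical, and it relies on each invocation starting a new session because no --continue or --resume flag is passed.

```python
def generation_command(task: str) -> list[str]:
    """Command for the step that writes or changes code."""
    return ["claude", "-p", task]


def review_command(path: str) -> list[str]:
    """Command for the independent review step.

    The prompt names only the file to review. Nothing from the
    generation step (its prompt, its output, its session) is passed in.
    """
    prompt = (
        f"Review the code in {path}. "
        "Check for bugs, missing input validation, and edge cases."
    )
    return ["claude", "-p", prompt]


generate = generation_command("Add a refund function to App.py")
review = review_command("App.py")
```

Running generate and review as separate processes means the reviewer sees only what is in the repository, which is the fresh set of eyes described above.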

Batch API vs Synchronous

Earlier, when we were running our code review, GitHub was making calls to the Claude API, passing it the prompt to perform the actual review, and waiting for the answer. That's one way to call the API. For other kinds of work, you can, to borrow a phrase, set it and forget it. Let's explore both options.

The Waiting is the Hardest Part

When developers commit their code and are eagerly anticipating the realization of their pre-merge, zero-bug, amazing-triumph-of-AI-engineering code review (or the agony of defeat, if that's your vibe), GitHub uses a synchronous API call. A synchronous API call blocks the pipeline until Claude Code responds to the request. There is no set-it-and-forget-it with a synchronous call. You have to wait for it to complete before any other work can be done in the pipeline. Nothing happens until results return from the API. And this is useful for a situation like this because the developer wants to fix their bug and try another code review so they can merge their change into the development branch. Remember: synchronous = wait for results, pipeline blocked until results return.

How About a Nice Batch of 50% Off?

Conversely, the Batch API exists. The Batch API is used for calls to Claude that are not time-sensitive, such as overnight report generation. Any task that can wait up to 24 hours to be processed is a good candidate for the Batch API. And Anthropic offers a 50% discount on API calls made through the Batch API because they can queue your requests and return them within 24 hours. There are no blocked pipelines. Remember: Batch API = non-time-critical work.
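The choice between the two can be written down as a small rule of thumb. The sketch below is illustrative only: the function names are hypothetical, the dollar amounts in any call are inputs you supply, and the only facts it encodes are the 24-hour window and the 50% discount described above.

```python
BATCH_DISCOUNT = 0.50      # the 50% Batch API discount
BATCH_WINDOW_HOURS = 24    # results may take up to this long


def choose_api(max_wait_hours: float) -> str:
    """Pick "batch" only when the caller can wait out the full window."""
    if max_wait_hours >= BATCH_WINDOW_HOURS:
        return "batch"
    return "synchronous"


def estimated_cost(synchronous_cost_usd: float, api: str) -> float:
    """Apply the batch discount to a cost quoted at synchronous rates."""
    if api == "batch":
        return round(synchronous_cost_usd * (1 - BATCH_DISCOUNT), 2)
    return round(synchronous_cost_usd, 2)
```

A pre-merge review that a developer is waiting on would call choose_api with a wait measured in minutes and get "synchronous", while an overnight report that can wait a full day would get "batch" at half the cost.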
